May 2026

Can AI Hire the Right Person?


– Or is it just seeing Digital Ghosts?


Papillon Executive Search has received permission from Espen Skorstad to republish this article. We strongly support the perspectives and conclusions outlined herein — particularly the recognition that human involvement in recruitment will continue to have an essential role to play.

Technology can undoubtedly improve decision support. It should not, however, be the sole determinant of who gets the job.

When the renowned music producer Juan Atkins first heard Kraftwerk in 1981, he was reportedly overwhelmed: “it felt like a UFO had landed on my turntable.” He had heard the sound of the future.

Many recruitment professionals must have felt something similar when they first adopted large language models in the early 2020s. What began as a curiosity has, in just a few years, transformed recruitment from a largely manual discipline into one defined by integrated systems, AI agents, and entirely new regulatory demands.

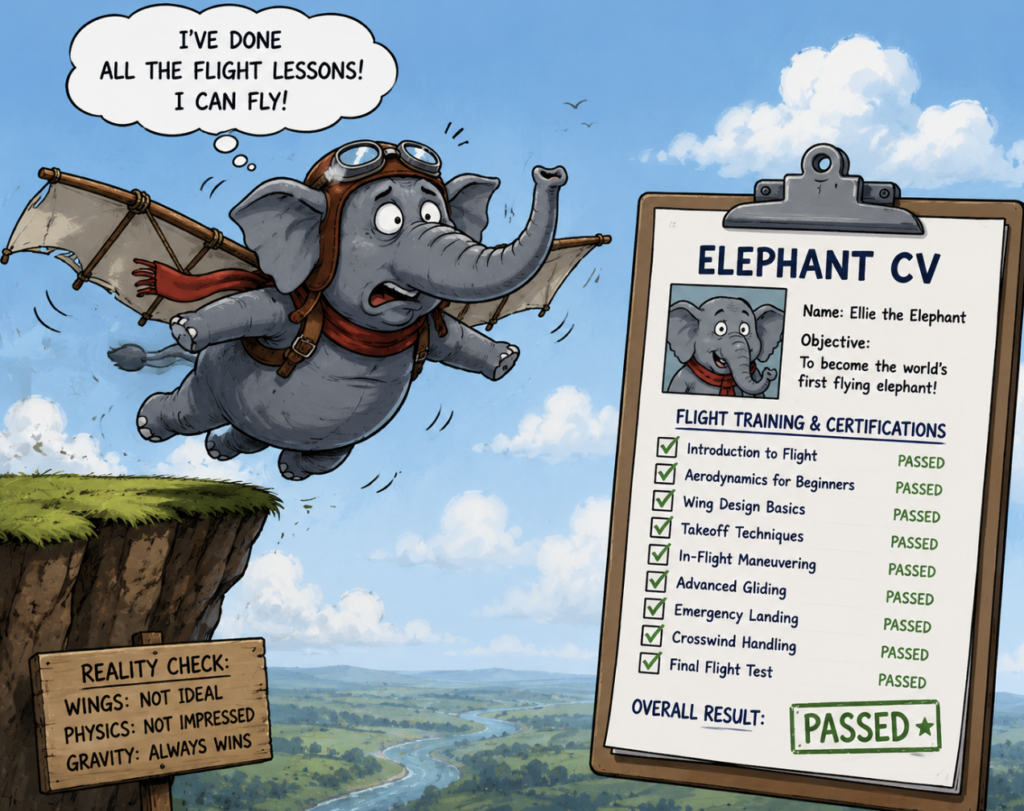

AI is now used in almost every part of the recruitment process. Between 50 and 80 percent of job seekers use such tools to write applications and tailor CVs. The appeal is obvious: they save time and can significantly improve quality. But they also introduce clear drawbacks. As more candidates rely on services such as LazyApply, applications and CVs increasingly begin to resemble one another.

As a result, distinguishing between candidates becomes far more difficult.

At the same time, employers are using AI to review and sort those same documents. Early systems relied on simple keyword matching. Today’s more advanced platforms use language models capable of interpreting meaning and context, not merely individual words. They can therefore identify relevant competencies even when candidates do not use the “right” terminology.
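The limitation of simple keyword matching can be illustrated with a minimal sketch. Everything in it is hypothetical (the function `keyword_score`, the keyword list, and both sample CVs are invented for illustration), and it does not represent how any particular screening platform works. It only shows why a candidate who describes the same competencies in different words scores poorly under exact matching.

```python
import re

def keyword_score(cv_text: str, keywords: list[str]) -> float:
    """Return the share of required keywords that appear verbatim in the CV."""
    # Lowercase the text and split it into plain alphabetic words.
    words = set(re.findall(r"[a-z]+", cv_text.lower()))
    hits = [kw for kw in keywords if kw.lower() in words]
    return len(hits) / len(keywords)

# Hypothetical requirements and CV excerpts, invented for this example.
required = ["python", "leadership", "budgeting"]

cv_a = "Python developer with leadership and budgeting experience."
cv_b = "Built data pipelines in Python, headed a team of eight, and managed a 2M cost plan."

score_a = keyword_score(cv_a, required)  # uses the exact terms
score_b = keyword_score(cv_b, required)  # same competencies, different wording

print(f"CV A: {score_a:.2f}")
print(f"CV B: {score_b:.2f}")
```

CV B describes leading a team and managing a budget, yet it matches only one of the three keywords. A system that interprets meaning rather than exact wording is intended to close precisely this gap.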

This creates a paradox. Candidates use AI to generate increasingly polished applications, while employers use AI to cut through that same automated volume. The result is a technological arms race in which AI not only streamlines the process, but also shapes the very criteria by which applicants are assessed.

That naturally raises the question of whether the traditional application and CV still hold the same value they once did.

AI has also gained ground in candidate selection itself. This is particularly evident in so-called asynchronous video interviews, where candidates respond via camera and microphone without an interviewer being present. The format creates greater standardization, but also removes follow-up questions, clarifications, and much of the natural dynamic found in a conventional interview.

The advantages are real. AI can improve efficiency and consistency. Algorithms are not influenced by mood, fatigue, or what Daniel Kahneman described as “noise.” This points back to a longstanding insight in psychology: as early as 1954, Paul Meehl demonstrated that when humans and a simple statistical model are given the same data, the model often produces more accurate predictions.

Yet many candidates experience the technology as impersonal and alienating. And when systems scale, errors scale with them. If a solution fails to measure what it is intended to measure, lacks consistency, or contains algorithmic bias, the consequences can be significant.

Amazon’s failed recruitment tool remains the most widely cited example. A model trained on historical data from a male-dominated industry learned patterns that systematically favored men.

Standardization, in other words, is not the same as fairness.

Manipulation and cheating further complicate the picture. In 2024, fewer than three percent of candidates reportedly used AI assistance during the actual assessment process itself. By 2025, that figure had risen to 23 percent.

That means nearly one in four candidates now uses AI not only for preparation, but also during digital interviews and psychometric testing. The methods are varied — and increasingly sophisticated:

- White text manipulation: Invisible keywords embedded in CVs that are detected by algorithms but remain unseen by human reviewers.

- Prompt injection: Hidden commands embedded in text that manipulate systems into ranking candidates more highly.

- Real-time assistance: Tools that artificially adjust eye contact during video interviews so that candidates can read scripts from another screen unnoticed, and hidden earpieces that feed them answer suggestions.

- Digital overlays: Advanced solutions that project answers to psychometric tests directly onto the screen in real time, bypassing control mechanisms entirely.

Not all assessments are equally vulnerable. Some newer platforms, such as Fairsight, are specifically designed to make AI-assisted manipulation more difficult. Candidates must construct responses themselves rather than selecting predefined alternatives. In addition, visual mechanisms — such as complex color combinations — are used in ways that humans interpret instantly, but which language models still struggle to decode reliably.

Taken together, these developments make the need for regulation increasingly clear. AI offers undeniable advantages in scalability and standardization, but it also introduces new risks related to bias and the erosion of human interaction. Under the EU AI Act, the use of AI in recruitment is classified as high-risk — and for good reason.

Technology can improve decision support, but it should not independently decide who gets hired.

At the same time, a genuine dilemma remains. If the requirement for human oversight simply means that hiring managers once again override processes based on instinct and gut feeling, we risk reintroducing precisely the noise and bias that standardization was intended to reduce.

The future of recruitment should be neither fully automated nor nostalgically human-driven. AI should be used where it makes evaluations more efficient, consistent, and accurate. But people must own the process, ensure quality control, and remain accessible to candidates. Applicants should encounter human beings — not disappear into a black hole of automated responses.

Only then does technology become genuine progress, rather than merely a more efficient way of repeating old mistakes.

February 2025

* Papillon has joined Kennedy International

– A global network of independent executive search boutiques.

Our network now extends to partner offices in Europe, Asia, North America and South America. All owners of the Kennedy partner firms have at least 10 years' experience in retained search in their respective markets. We all adhere to the highest business ethics and professional standards. The Kennedy affiliation guarantees a superior approach, execution and experience for our clients, candidates and stakeholders. With Papillon Executive Search joining Kennedy, you can still expect the best in the business – now also internationally.

January 2025

* Kjell I. Johnsen has joined Papillon Executive Search

– as Senior Advisor International

With a long and diverse career as an executive, director, and leader, he’s worked across the globe for multinational companies. For the past 20 years, he’s been dedicated to helping Norwegian and international clients find the right talent, recruiting for roles in nearly every industry and function you can think of.
